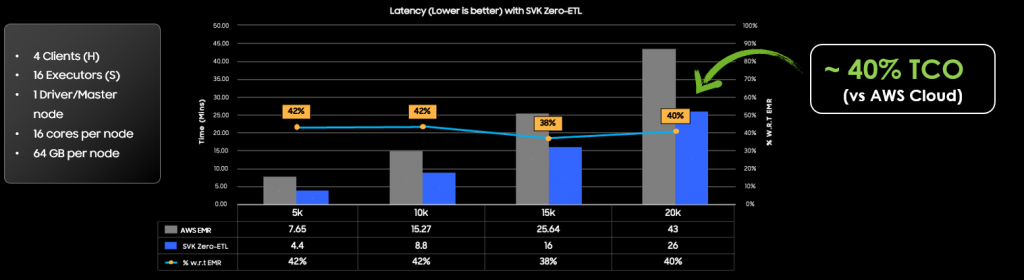

Zero-ETL is a popular use case in the database world. Today, ETL pipelines incur multiple data transfers and copy overhead. SVK’s zero-ETL framework aims to reduce or eliminate the extra data transfers and copies in the ETL path by providing an easy interface that lets data modelers and AI engineers perform data-intensive tasks (e.g., synthetic data generation, ML calculation, and transformations) near the data source. This reduces the need for large data extracts and loads, enabling faster and more TCO-effective ETL pipelines.

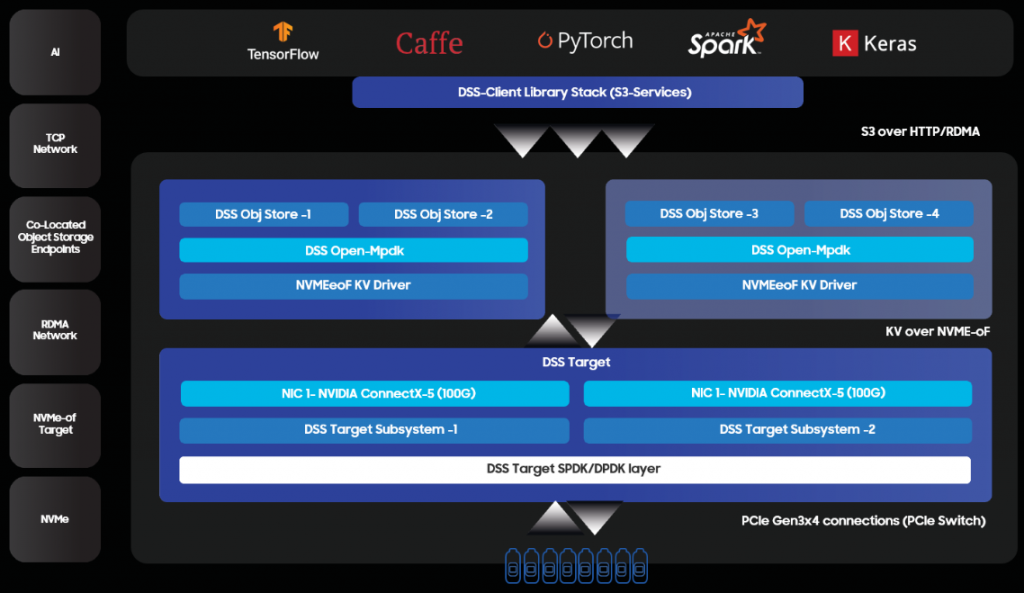

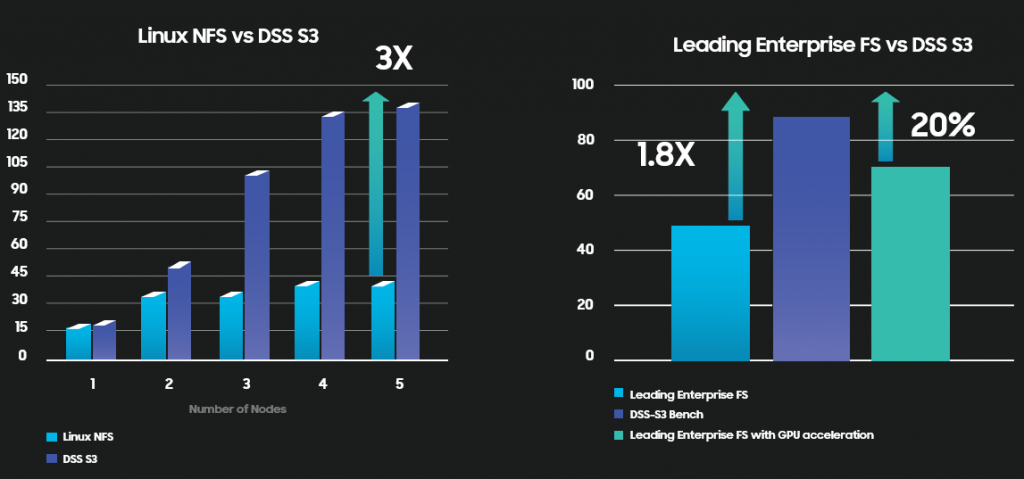

Samsung has developed DSS, a rack-scalable, Amazon S3-compatible object storage solution optimized for very high read bandwidth.

It uses a disaggregated architecture, enabling independent scaling of storage and compute. DSS is designed to make the most of system-level design and Samsung’s best SSDs to deliver maximum performance while minimizing OPEX.

• Large-scale, high-throughput training

• Image Analytics

• Audio/Video AI

• Metaverse

• High-throughput storage access: 3x better than NFS

• 20% better than a leading GPU-accelerated enterprise filesystem

• Truly scalable solution

• Lower TCO with fewer storage nodes serving more clients

Samsung, as the world leader in DRAM and Flash memory technologies, recognized early on the potential of a CXL-based solution architecture. Samsung has invested heavily in building in-house technologies, know-how, and device solutions to fully enable the capabilities that CXL brings to next-generation system architecture. These investments include the CXL Memory Module – DRAM (CMM-D) device, CXL Memory Module PMEM/Hybrid devices (CMM-H), and the solution-level device CXL Memory Module – Memory Box (CMM-B). Additionally, Samsung has invested in a set of world-class firmware, drivers, APIs, and management software to help customers quickly adopt these solutions, which are aimed at reducing total cost of ownership.

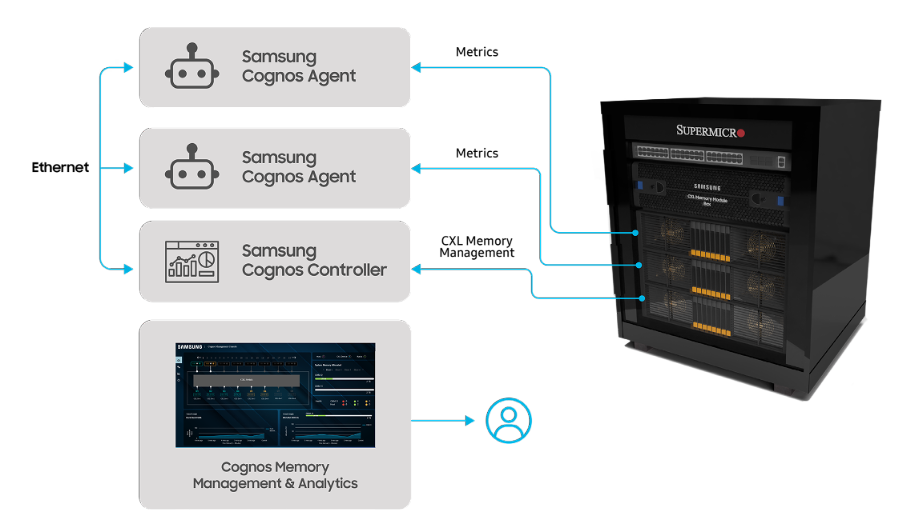

Samsung Cognos provides the glue that stitches all of the CXL-based technologies and devices into one homogeneous and integrated solution that developers and customers can easily adopt and use in their cloud or private data center solutions and services. Cognos includes a software developer kit that provides the foundational software components that are needed to quickly utilize the new CXL-based devices with customer applications and a host of management software, including a GUI to manage one-to-many server/switch nodes to handle rack-level cluster/system management. Samsung Cognos addresses memory stranding problems by pooling memory into a global resource that is shareable and reusable by resources across the data center. It provides a value-added software layer for Memory Box and CMM-D in the host to enable easier adoption by applications with easy-to-use interfaces.
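To make the memory stranding problem concrete, here is a toy model in Python. It is only an illustrative sketch and does not use any Cognos API: the `Host` class, the function names, and all the capacities are assumptions chosen for the example. It counts how much DRAM sits idle on under-used hosts while other hosts run short, and then how much of that shortfall a shared pool of a given size could absorb.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Host:
    """Hypothetical host description used only for this illustration."""
    name: str
    local_gib: int   # DRAM installed in the host
    demand_gib: int  # memory the host's workloads would like to use


def stranded_and_unmet(hosts: List[Host]) -> Tuple[int, int]:
    """Without pooling, idle memory on one host cannot serve another host."""
    stranded = sum(max(h.local_gib - h.demand_gib, 0) for h in hosts)
    unmet = sum(max(h.demand_gib - h.local_gib, 0) for h in hosts)
    return stranded, unmet


def unmet_with_pool(hosts: List[Host], pool_gib: int) -> int:
    """With a shared pool, hosts that overflow local DRAM borrow from the pool."""
    _, unmet = stranded_and_unmet(hosts)
    return max(unmet - pool_gib, 0)


# Made-up numbers: three hosts with identical DRAM but uneven demand.
hosts = [Host("a", 256, 100), Host("b", 256, 400), Host("c", 256, 300)]

stranded, unmet = stranded_and_unmet(hosts)
print(f"stranded: {stranded} GiB, unmet: {unmet} GiB")
print(f"unmet with a 192 GiB pool: {unmet_with_pool(hosts, 192)} GiB")
```

In this made-up cluster, host `a` leaves memory idle while hosts `b` and `c` run short; a shared pool lets the overflow be served from a common resource instead of over-provisioning every host.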

Zero-ETL is an approach that aims to minimize or eliminate the traditional ETL processes that are typically used to extract data from source systems, transform it into the desired format, and then load it into a data warehouse or data lake for analysis. In the context of Zero ETL, the data is often accessed in real-time or near real-time, eliminating the need for batch processing. This approach is becoming more popular with the advent of technologies like Change Data Capture (CDC) and streaming data pipelines.
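The Change Data Capture idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not SVK's or any vendor's implementation: the event format (`op`, `key`, `row`) and the `apply_change` helper are hypothetical. Instead of periodically re-extracting and reloading a whole table, each change event is applied to the analytical copy as it arrives.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def apply_change(replica: Dict[int, Row], event: Dict[str, Any]) -> None:
    """Apply one change event (hypothetical format) to an in-memory replica."""
    op = event["op"]
    key = event["key"]
    if op in ("insert", "update"):
        # Inserts and updates both carry the full new row image.
        replica[key] = event["row"]
    elif op == "delete":
        replica.pop(key, None)
    else:
        raise ValueError(f"unknown operation: {op!r}")


# A made-up stream of changes captured at the source.
changes: List[Dict[str, Any]] = [
    {"op": "insert", "key": 1, "row": {"name": "sensor-a", "reading": 20}},
    {"op": "insert", "key": 2, "row": {"name": "sensor-b", "reading": 35}},
    {"op": "update", "key": 1, "row": {"name": "sensor-a", "reading": 22}},
    {"op": "delete", "key": 2},
]

replica: Dict[int, Row] = {}
for event in changes:
    apply_change(replica, event)

print(replica)
```

Only the four change events cross the wire here; the replica never needs a bulk extract or a batch reload to stay current.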

Optimizing Data Architecture with Samsung SVK & VMware Greenplum

Ivan Novick, Director, VMware & David McIntyre, Director, Samsung & Pramod Peethambaran, Director, Engineering, Samsung

About this talk:

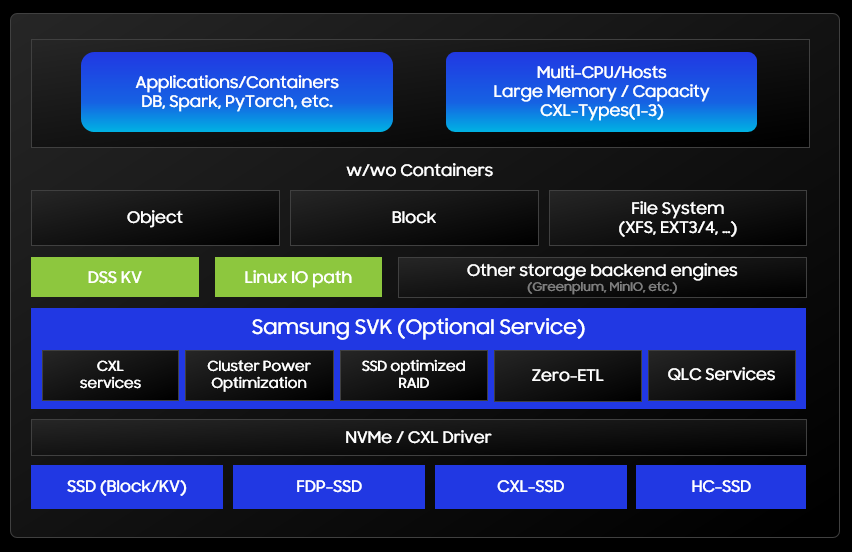

The High Performance Object Storage (HPOS) stack enables disaggregated storage over multiple CMM-HC devices (Samsung’s computational storage drives). It is a data-centric solution that abstracts which computational function runs in which accelerator layer (i.e., CPU, GPU, XPU, or SmartSSD) and scales easily. Samsung’s HPOS solution provides a reference solution for Video AI applications that require in-storage video pre-processing. For data analytics use cases, it provides high performance for unstructured/object data in a data lake.

Whitepaper posted at MemCon 2023

As AI technology evolves into more sophisticated applications, training dataset sizes continue to grow exponentially. To scale storage and network infrastructure commensurately, deliver the required data, and avoid unbearable training cycle times, a new storage concept and an innovative solution are needed to address this technology gap. DSS Gen2 and beyond provide a potential next-generation architecture and solution to alleviate this bottleneck.

Another major impediment to data center scaling is power utilization. Since data storage is one of the key power consumers, DSS technology plans not only to optimize server power utilization but also to leverage Samsung’s new high-capacity SSDs, which have some of the highest densities in the world.

2022 OCP Global Summit

With the advent of new application workloads related to Big Data, including AI/ML, IoT, video, security, and many other kinds of machine-generated data, companies and governments have a strong incentive to mine this treasure trove of data to extract value. Innovative companies taking on these storage challenges have tried storing Big Data in data lakes and moving it into locally attached storage for analysis, but because of the sheer size of the data sets this turns out to be too time consuming. Traditional network data storage has also been explored, but overcoming its inherent scaling and performance bottlenecks is difficult. DSS provides an innovative solution to the problem: purpose-built storage that targets only these specific workloads.
